By Sam Schechner and Valentina Pop

BRUSSELS -- An alliance of election officials, tech companies and social-media researchers is stepping up efforts to thwart attempts by hackers and peddlers of disinformation to interfere in and skew European elections in May.

Stakes are unusually high in these elections, which come as Britain's future in the European Union remains uncertain, nativism and nationalism are roiling EU politics, and external forces, including foreign meddling, migration and trade, threaten further destabilization.

Since 2016, when Russia worked to sway voters in the U.S. presidential election by using fake social-media accounts on Facebook Inc. and Twitter Inc., researchers and government officials have sounded alarms and tried to head off similar techniques in elections world-wide.

Policing the European Parliament elections, which will span 24 languages in at least 27 countries to elect more than 700 members, poses one of the greatest challenges yet, election officials and tech executives say. Monitoring so many votes is logistically complex, and new actors -- both domestic and foreign -- are using Russia's playbook to foment discord.

"These elections present a tempting target for those who wish to sow dissent and disrupt our democratic debate," said Julian King, the EU's security commissioner.

Since last year, Russian state-backed hacking groups dubbed APT28 and Sandworm Team have been targeting EU governments and other groups with a view toward these elections, according to cybersecurity firm FireEye Inc.

Now, EU officials are coordinating to counter election interference. Until recently, the bloc's main weapon against disinformation was a Twitter account, EU Mythbusters, with 41,000 followers on a continent of 500 million people.

The EU recently doubled its annual budget for a task force fighting online disinformation. Since January, an EU network of national election authorities and cybersecurity and data-protection experts has met monthly and has set up a "rapid alert system" on disinformation, says Christian Wiegand, a spokesman for the European Commission, the EU's executive arm.

But the EU's annual spending of several hundred million euros on cybersecurity and countering disinformation pales in comparison with Washington's multibillion-dollar budget earmarked for this year, EU auditors say. National governments in Europe each have their own cybersecurity budgets, but there is no clear overview, and EU spending is so scattered that "we don't know exactly how much is being spent," said auditor Baudilio Tome Muguruza. "Fragmentation points to a coordination problem that can be exploited by cyberattackers."

Major tech companies have pledged to do more to slow the spread of election-related disinformation. A central focus has been adding transparency to ads that are categorized as political, allowing individuals and researchers to see who paid for them. Twitter earlier this month began showing EU campaign ads in a global repository of political ads that it publishes.

Facebook late Thursday expanded its library of political ads to cover the EU as well, and added a computer interface called an API to allow researchers to access it more efficiently. The company says it will start blocking political ads in EU countries in mid-April unless advertisers register with Facebook, which says it will verify their identities and check that they are located in the countries where they are advertising.

But researchers say paid ads represent only part of the problem, because disinformation campaigns are often conducted through nonpaid sharing of false or misleading news articles, memes and other content that is harder to detect.

Cleaning up paid political ads is "low-hanging fruit in terms of transparency and regulation," said Chloe Colliver, who heads the digital-research unit of the Institute for Strategic Dialogue, a London think tank that is monitoring campaigns in Germany, France, Italy, Spain and Poland. Ads, she said, are "just the tip of the iceberg."

Tech companies are also improving how they detect networks of fake accounts used to distribute misleading electoral information. Facebook recently removed networks of accounts in several countries, including the U.K. and Belgium, the company says.

But EU officials say the companies have failed to take down fake accounts, correct disinformation spread on their platforms or give fact-checkers real-time access to data. Facebook said in its fourth-quarter earnings report that it estimates false accounts represented about 5% of the 2.32 billion users worldwide who connected to Facebook at least once in the last 30 days of the quarter -- or some 116 million accounts.

"We'd still like to see greater action against fake accounts and bots," said Mr. King. "There is still a long way to go, and the clock is ticking," he said.

A Facebook spokeswoman says the company is making progress in removing fake accounts, "which we believe are the source of the vast majority of fake news." Facebook removed 1.5 billion fake accounts in the six months between April and September of 2018, most within minutes of being created, she said.

Complicating efforts is the metastasis of disinformation, which has become much more local. "Domestic actors are now a much bigger threat than Russia," said Clint Watts, a fellow at the Foreign Policy Research Institute in Washington.

Disinformation is also moving beyond Twitter and Facebook to other platforms -- such as Facebook-owned Instagram. The photo-sharing network is popular for spreading visual disinformation -- such as an image of an event with a false caption -- that doesn't trip automated filters, researchers say.

Twitter says it has stepped up efforts to weed out fake accounts and malicious actors, and has published archives of past disinformation campaigns for researchers to examine.

Facebook says Instagram is included in its election-integrity efforts, and has highlighted the numbers of Instagram accounts it has closed in cases of what it calls "coordinated inauthentic activity."

Architects of disinformation campaigns also increasingly find content posted by real people or activists that they can spread, rather than creating their own content and using fake personas to spread it, researchers say.

"There is a ready supply of nasty stuff being produced by genuine people," Ms. Colliver said.

--Daniel Michaels contributed to this article.

Write to Sam Schechner at sam.schechner@wsj.com and Valentina Pop at valentina.pop@wsj.com

(END) Dow Jones Newswires

March 29, 2019 07:14 ET (11:14 GMT)

Copyright (c) 2019 Dow Jones & Company, Inc.

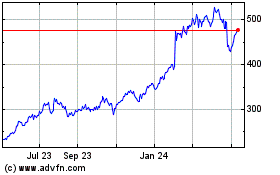

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Mar 2024 to Apr 2024

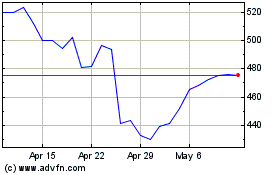

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2023 to Apr 2024