By Zeke Turner

This article is being republished as part of our daily

reproduction of WSJ.com articles that also appeared in the U.S.

print edition of The Wall Street Journal (January 11, 2018).

BERLIN -- Germany has gone live with one of the most onerous

laws aimed at forcing Facebook Inc., Twitter Inc. and YouTube to

police content on their platforms.

The verdict after 10 days in effect? It's complicated.

Since Jan. 1, technology companies face fines of up to EUR50

million ($60 million) if they fail to delete illegal content on

their platforms, ranging from slander and libel to neo-Nazi

propaganda and calls to violence. The law applies to most

social-media networks in Germany.

The banned content was always illegal. What's new is that social

networks with more than two million users in Germany now are

responsible for cleaning it up themselves.

The new law pushes U.S.-based social-media platforms in Germany

one step closer to the level of responsibility that newspapers and

media here have long faced -- a level far higher than what the

platforms have faced domestically. Under U.S. law, tech platforms

aren't liable for user content shared on their services.

Many of the Silicon Valley giants affected by the new rules have

already pushed back publicly in Germany. The law has also raised

alarm among free-speech watchdogs and legal experts.

"In a democracy, it has to be a state organization that enforces

the law," said Dieter Frey, a lawyer and media expert in

Cologne.

Social-media companies typically rely on software and a mix of

in-house and third-party content moderators, who sift through posts

users have flagged as problematic and delete those that violate

local law. In certain cases, the companies' legal teams jump in.

The law often has companies working under time pressure to

determine whether a post breaches one of 24 paragraphs of the

criminal code.

Ahead of the new law, Facebook contracted with providers for

1,200 moderators in Germany, a number that compares with 7,500

moderators world-wide. Just 1.5% of Facebook's 2.07 billion monthly

users world-wide are based in Germany.

Facing increased pressure after the U.S. election and terror

attacks around the globe, tech companies have taken some voluntary

steps to monitor the massive amount of content on their platforms.

Facebook, for instance, is figuring out how to fully monitor and

analyze the more than one million user reports of potentially

objectionable content that it says it receives every day.

At YouTube, a unit of Alphabet Inc.'s Google where users watch

more than a billion hours of video a day, the company has used both

software and humans to screen for content that warrants removal,

such as extremist videos. In the U.S., Google has said it instructs

human reviewers to mark violent or hateful content as low quality,

which will likely move such sites lower in Google search results.

Twitter has been using internal technology to flag accounts that

promote terrorism.

Following the rules can pose practical difficulties, as companies

have found in the first 10 days Germany's new law has been in

effect.

This month, Twitter temporarily suspended the account of a

German satire magazine, Titanic, demanding that the magazine delete

a parody tweet mocking a German nationalist lawmaker, Beatrix von

Storch.

Tim Wolff, Titanic's editor-in-chief, said Twitter notified him

of the suspension by email on Tuesday, Jan. 2, and asked him to

delete the tweet. Later, other users piled on and flagged four

other tweets on Titanic's account, including one that made fun of

German police and another about Austria's Chancellor Sebastian

Kurz, whom Titanic has called "Baby Hitler" and said should be

killed.

Twitter asked Mr. Wolff to delete those four tweets as well.

Then, after an internal review that included input from legal

professionals on its support team in Germany, Twitter dropped that

request and reversed Titanic's suspension. The tweet about Ms. von

Storch remains offline.

Mr. Wolff said, "Our suggestion is to let us at Titanic decide

what is satire and what isn't."

In another case, on Dec. 22, before the law had taken full

effect, Facebook blocked the account of Mike Samuel Delberg, a

28-year-old political representative of Berlin's Jewish community,

after he posted a video of an Israeli restaurant owner in Berlin

being threatened on the street.

In a false positive, Facebook content monitors thought the video

violated the company's community standards. "It went viral," Mr.

Delberg said. Then "all of a sudden Facebook deleted the video and

blocked me and said that I broke their guidelines." The company has

since apologized to Mr. Delberg for deleting the video and he is

back online.

"It can't be true that...while raising awareness in public of

anti-Semitism, an account gets deleted," Mr. Delberg said.

"We should not be the ones who judge if a post is illegal or

not," said Semjon Rens, Facebook's public-policy manager in

Germany. "This is the responsibility of courts," he said, adding that

Facebook is "working hard to put the right processes in place and

to comply."

A spokesman for Google's YouTube said it would "continue to

invest heavily in teams and technology" to be able to remove

"content that breaks our rules or German law" more quickly.

A representative for Twitter declined to comment on the record

or to disclose the size of the team it employs to review content on

its site but did explain the company's views.

German enforcement officials are still finding their way. Ulf

Willuhn, a senior public prosecutor in Cologne, spent the beginning

of the year considering whether his office would have to pursue

legal action based on a tweet from an anti-immigrant lawmaker --

the real Ms. von Storch, not a parody this time -- who had referred

to the local Arabic-speaking community as "group rapists."

After some research, Mr. Willuhn decided his office wasn't

responsible. Separately, Twitter had already asked her to delete

her tweet.

"At the end of the day, ...it's not as easy as it seems at first

glance," Mr. Willuhn said. "Yeah, it's very, very difficult."

Write to Zeke Turner at Zeke.Turner@wsj.com

(END) Dow Jones Newswires

January 11, 2018 02:48 ET (07:48 GMT)

Copyright (c) 2018 Dow Jones & Company, Inc.

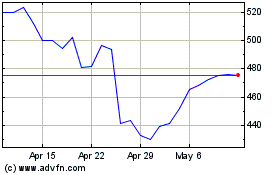

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2024 to May 2024

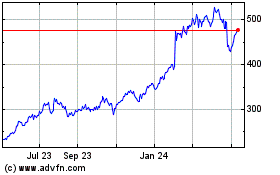

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From May 2023 to May 2024